I'm an incoming fourth-year PhD student in the University of Wisconsin-Madison Computer Sciences department (a top-15 graduate CS program at a public research university), working with Dr. Yuhang Zhao (Assistant Professor, HCI & Accessibility) and her madAbility Lab (an accessibility research lab at UW-Madison). I study human-computer interaction (HCI), particularly extended reality (AR/VR/XR), accessibility (a11y), and AI-powered interactive systems to improve how people with disabilities access novel technology. I am also interested in how immersive video technologies (livestreams, 360° cameras, projection mapping) and computer graphics concepts (raytracing, real-time rendering) can be applied to fields like education, communication, esports, and healthcare.

Prior to Wisconsin, I earned my Bachelor of Science in Computer Science from The University of Texas at Austin (a top-10 undergraduate CS program) with certifications in Digital Arts & Media (an undergraduate intersectional program; 19 credits including a capstone project) and immersive technologies (a graduate program in interactive media and storytelling; inaugural cohort). At Texas I worked with Amy Pavel (researcher and professor, HCI & Accessibility; now at UC Berkeley) on livestream accessibility, and with Erin Reilly (Professor, Immersive Media and Storytelling) and Lucy Atkinson (Professor, Environmental Communication) on multiple projects, including AR for skin cancer prevention.

Outside of research, I test new products for HP HyperX and Dell Alienware. I also enjoy longboarding, running, backpacking, language learning, moderating online communities, and tracking the music I listen to on last.fm.

News

Featured Research

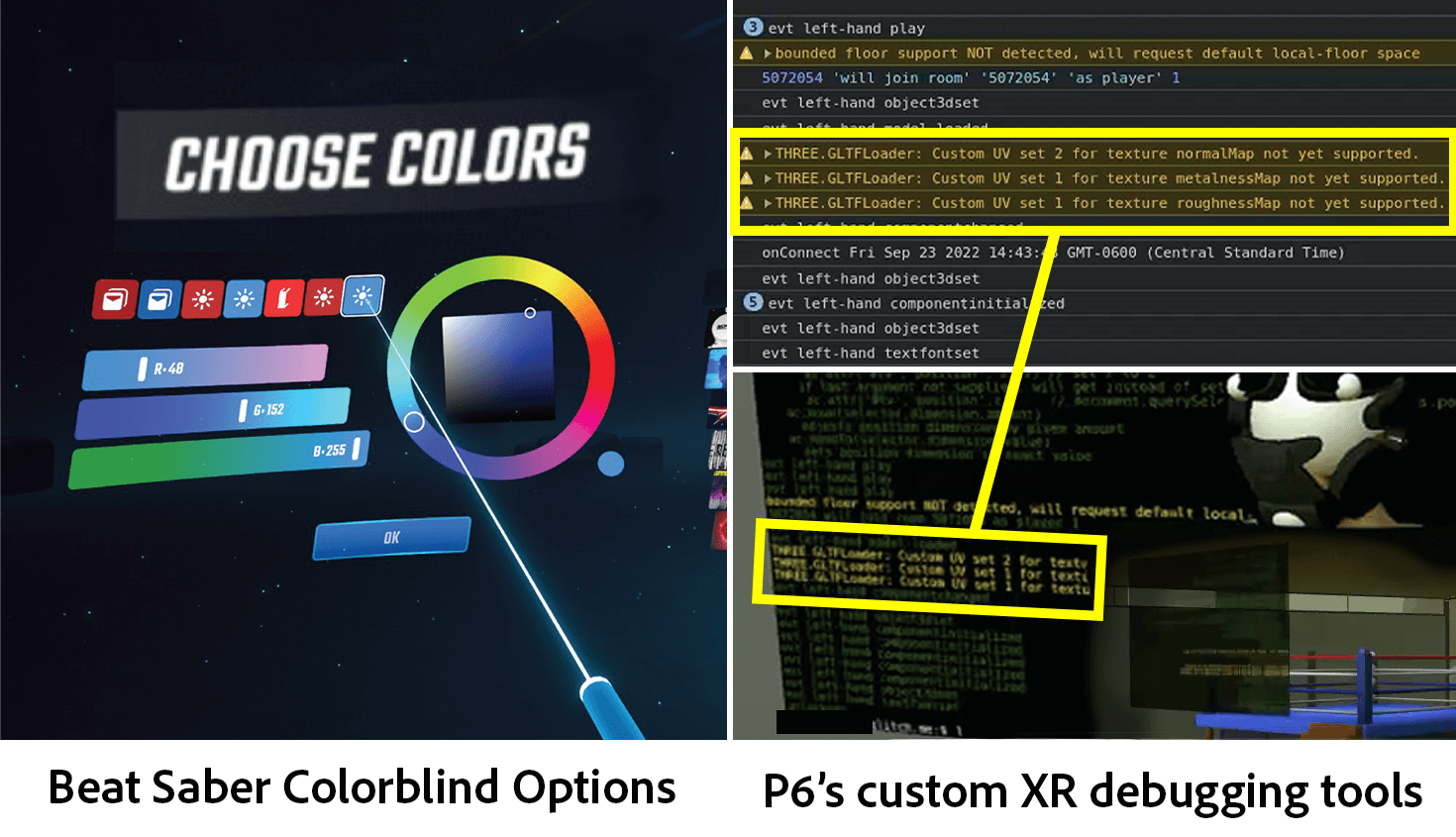

How Well Can 3D Accessibility Guidelines Support XR Development? An Interview Study with XR Practitioners in Industry

Daniel Killough, Tiger F. Ji, Kexin Zhang, Yaxin Hu, Yu Huang, Ruofei Du, Yuhang Zhao

Evaluating existing 3D accessibility guidelines with XR practitioners across different levels of industry and examining why those guidelines don't fully support XR development. Also see 'XR for All', an extended version of this work that includes additional perspectives on a11y development as a whole.

VRSight: AI-Driven Real-Time Scene Descriptions to Improve Virtual Reality Accessibility for Blind People

Daniel Killough, Justin Feng*, Zheng Xue "ZX" Ching*, Daniel Wang*, Rithvik Dyava*, Yapeng Tian, Yuhang Zhao

Using state-of-the-art object detection, zero-shot depth estimation, and multimodal large language models to identify virtual objects in social VR applications for blind and low vision people. *Authors 2, 3, 4, & 5 contributed equally to this work.

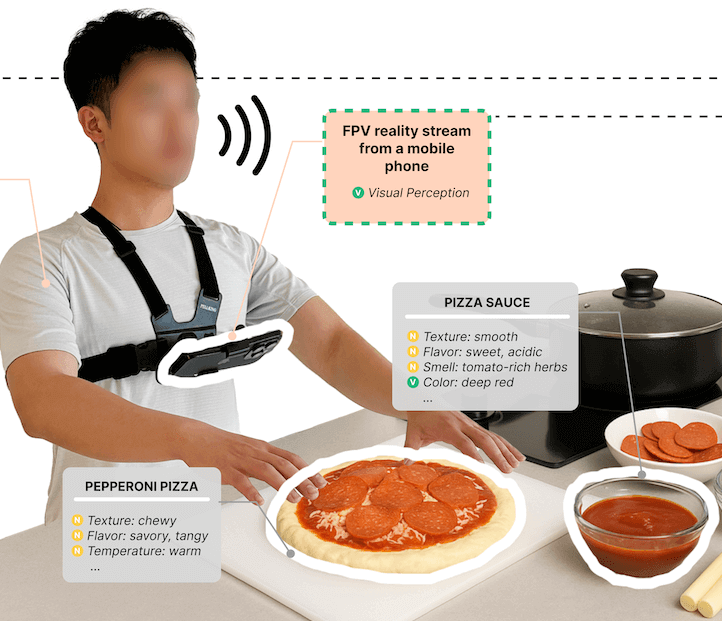

AROMA: Mixed-Initiative AI Assistance for Non-Visual Cooking by Grounding Multi-modal Information Between Reality and Videos

Zheng Ning, Leyang Li, Daniel Killough, JooYoung Seo, Patrick Carrington, Yapeng Tian, Yuhang Zhao, Franklin Mingzhe Li, Toby Jia-Jun Li

AI-powered cooking assistant using wearable cameras to help blind and low-vision users by integrating non-visual cues (touch, smell) with video recipe content. The system proactively offers alerts and guidance, helping users understand their cooking state by aligning their physical environment with recipe instructions.

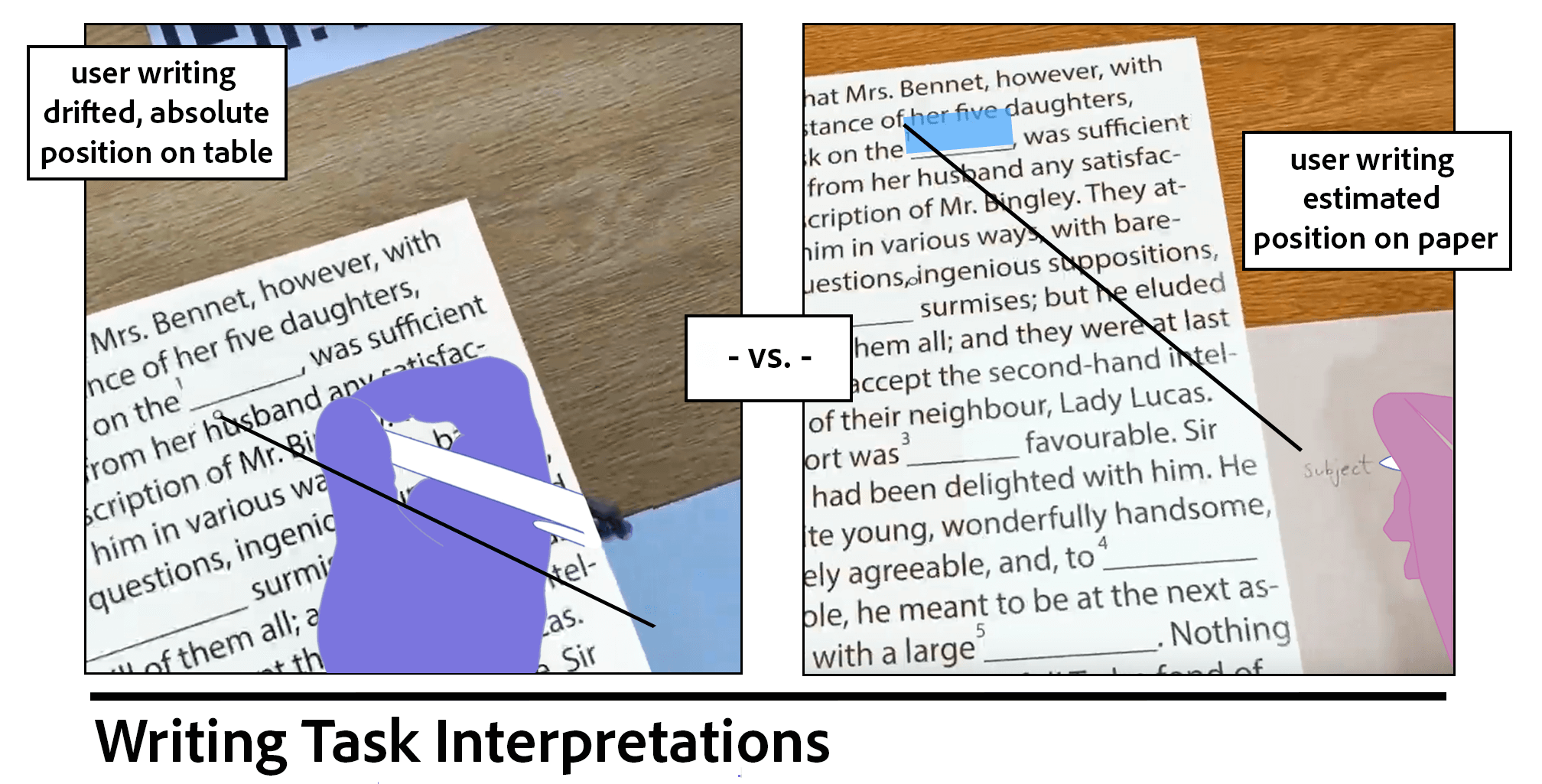

Understanding Mixed Reality Drift Tolerance

Daniel Killough*, Ruijia Chen*, Yuhang Zhao, Bilge Mutlu

Evaluating how mixed reality's tendency to drift objects affects users' task performance and their perception of task difficulty.

Exploring Community-Driven Descriptions for Making Livestreams Accessible

Daniel Killough, Amy Pavel

Making live video more accessible to blind users by crowdsourcing audio descriptions for real-time playback. Descriptions were crowdsourced from 18 sighted community experts and evaluated with 9 blind participants.